The appearance of MongoDB around 2009 disrupted the more traditional ways of storing information in databases. Since then, it has earned a firm place among IT solutions and is well known to any technology enthusiast today. Its dynamic schema makes data integration easier and faster in certain applications.

The growth of this technology is powered by MongoDB Atlas (MongoAtlas), a cloud-based database service created and maintained by MongoDB, the company behind the database. It runs on cloud providers like AWS, Azure, and Google Cloud and helps users provision, maintain, and secure new databases for their applications.

💾 Data reaction

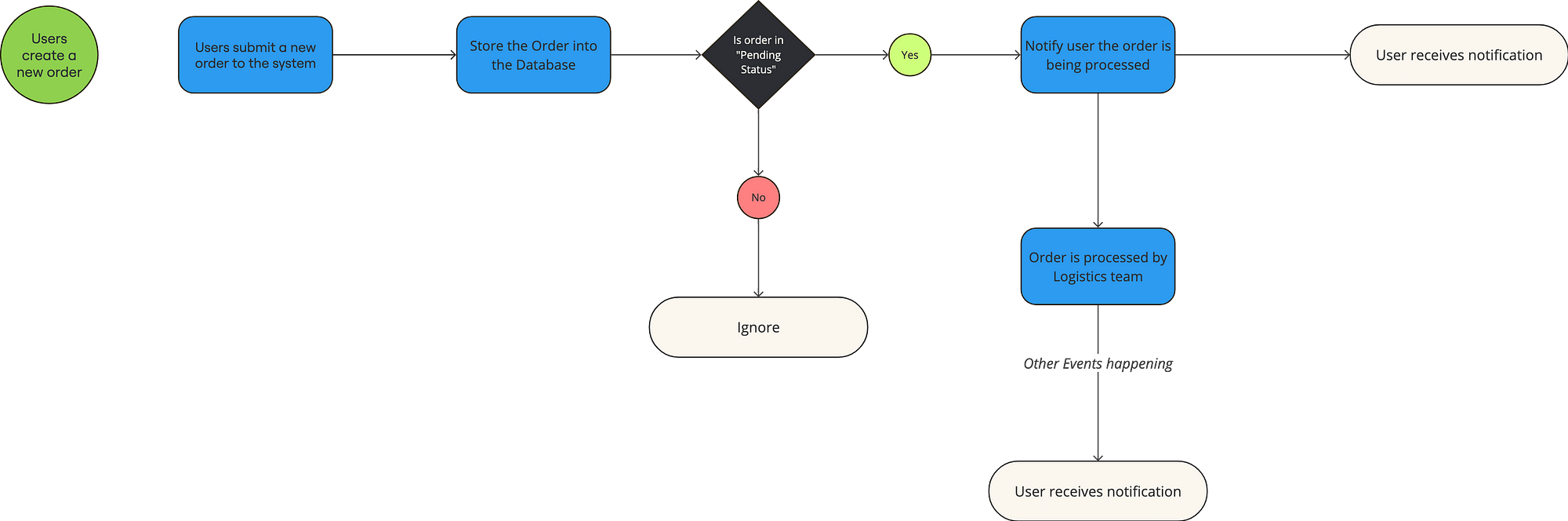

Let’s now combine this database with an Event-Driven architecture. This brings the need to react to data changes, such as inserts or updates, by executing certain actions. A typical Event-Driven use case is a Supply Chain system. As a basic example, consider sending a notification whenever a new order is created. The diagram illustrates this scenario.

MongoAtlas offers capabilities to handle these situations, for example through triggers that run custom functions. However, this implies keeping part of our application’s logic within Atlas, which may be fine for specific cases but certainly complicates versioning and scaling that logic.

As the title suggests, we will use the AWS cloud, particularly the AWS EventBridge service, to solve this problem in a scalable way. You might wonder why this article focuses on MongoAtlas and not on DocumentDB, the MongoDB-compatible database service offered by AWS. The truth is that, although MongoAtlas also runs on the AWS cloud, its offering is much more comprehensive. Its interface, the ease of creating and managing clusters, log management, statistics, and indexes, and the fact that the company responsible for maintaining MongoDB is the one supporting Atlas make it the preferred option in most situations.

In a more ‘traditional’ integration between MongoAtlas and EventBridge, we could have used triggers or cron jobs to connect data changes with an interface on the AWS side, whether it be a Lambda function or an EventBridge bus. But what I present in this article is a much easier and faster way to achieve this through a direct connection using the Partner Event Source within the EventBridge configuration. This functionality allows us to receive events from external applications on an EventBridge bus, facilitating configuration and connectivity between them.

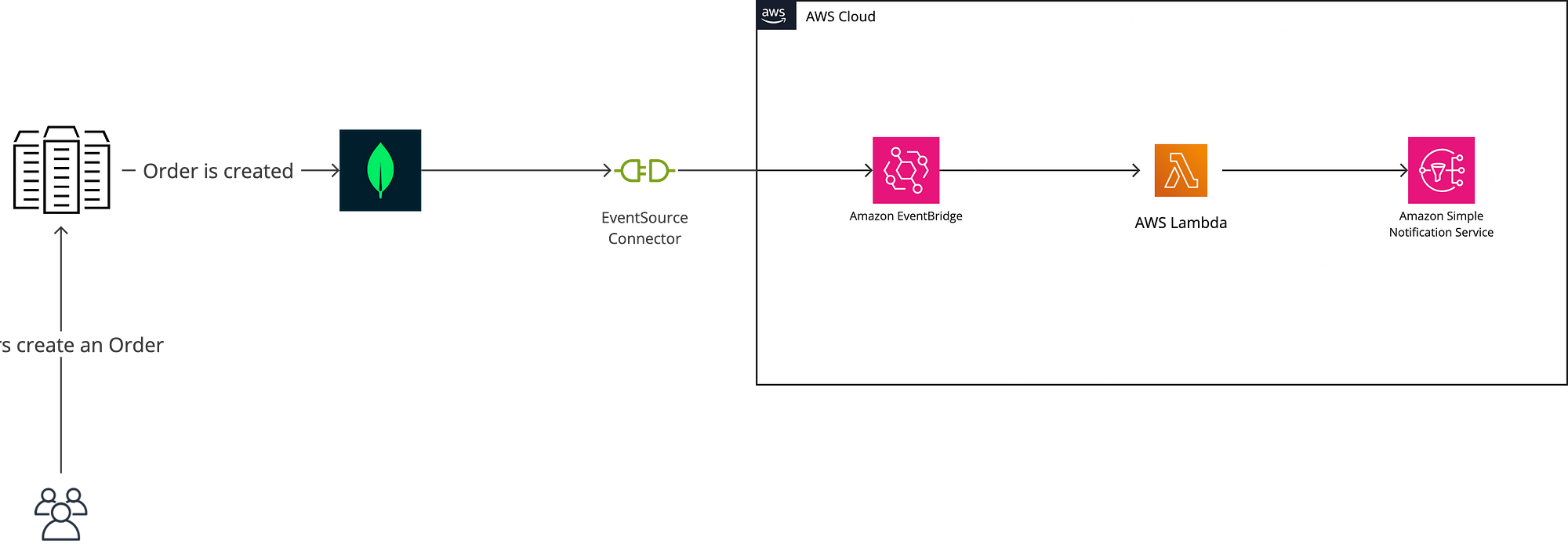

So, applied to the same scenario as before, where a new order is inserted, the updated diagram reflects this new flow.

🫳 Hands On

Let’s get our hands a little dirty and make this connection possible. Let’s do it step by step.

Prerequisites

- A MongoAtlas account, as well as a project and a Cluster where you will store your application data and where you want to react to events. If you are just exploring, don’t worry: all of this is free.

- An AWS account with access to EventBridge to configure the other side of our connection and receive the associated events.

Set Up the MongoDB Partner Event Source

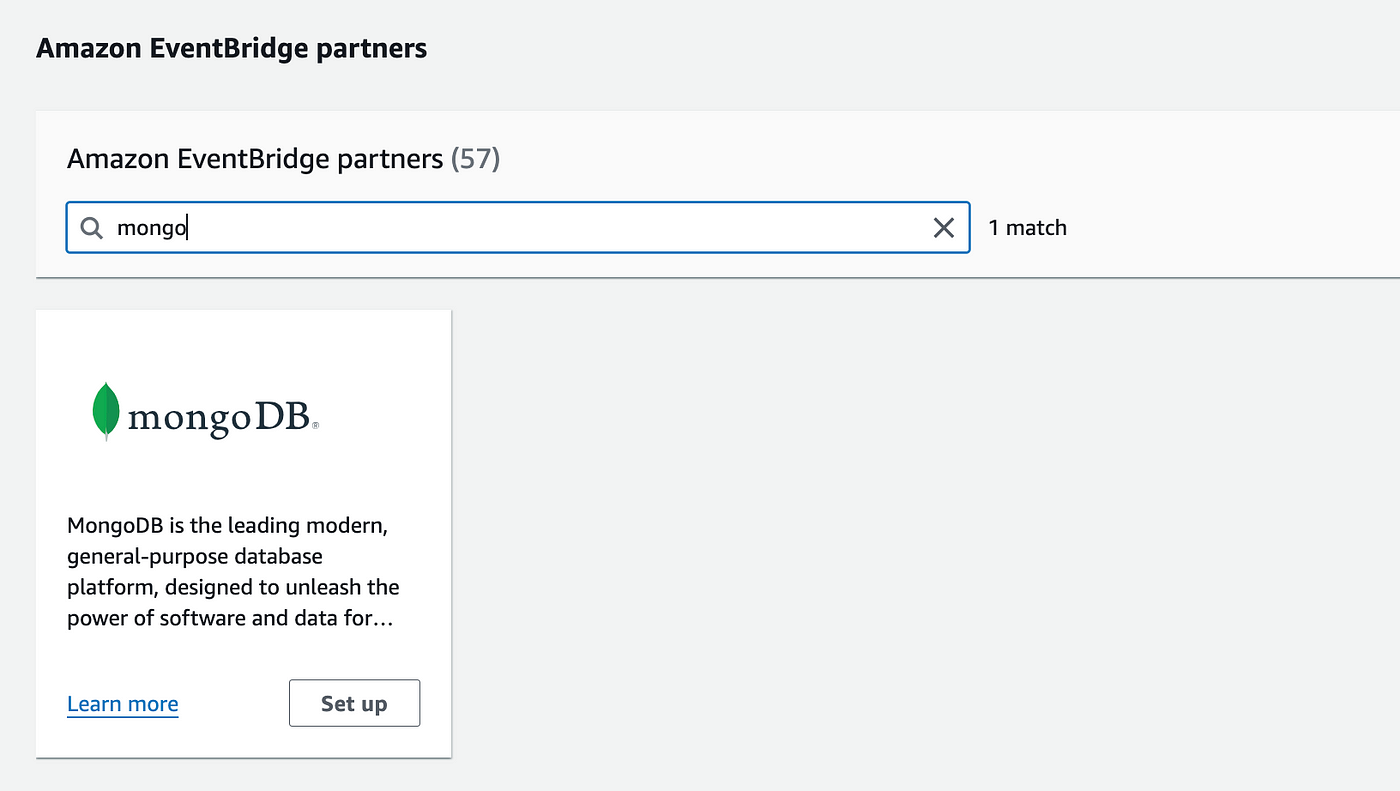

To send trigger events to AWS EventBridge, you need the AWS account ID of the account that should receive the events. Open the Amazon EventBridge console and click Partner event sources in the navigation menu. Search for the MongoDB partner event source and then click Set up.

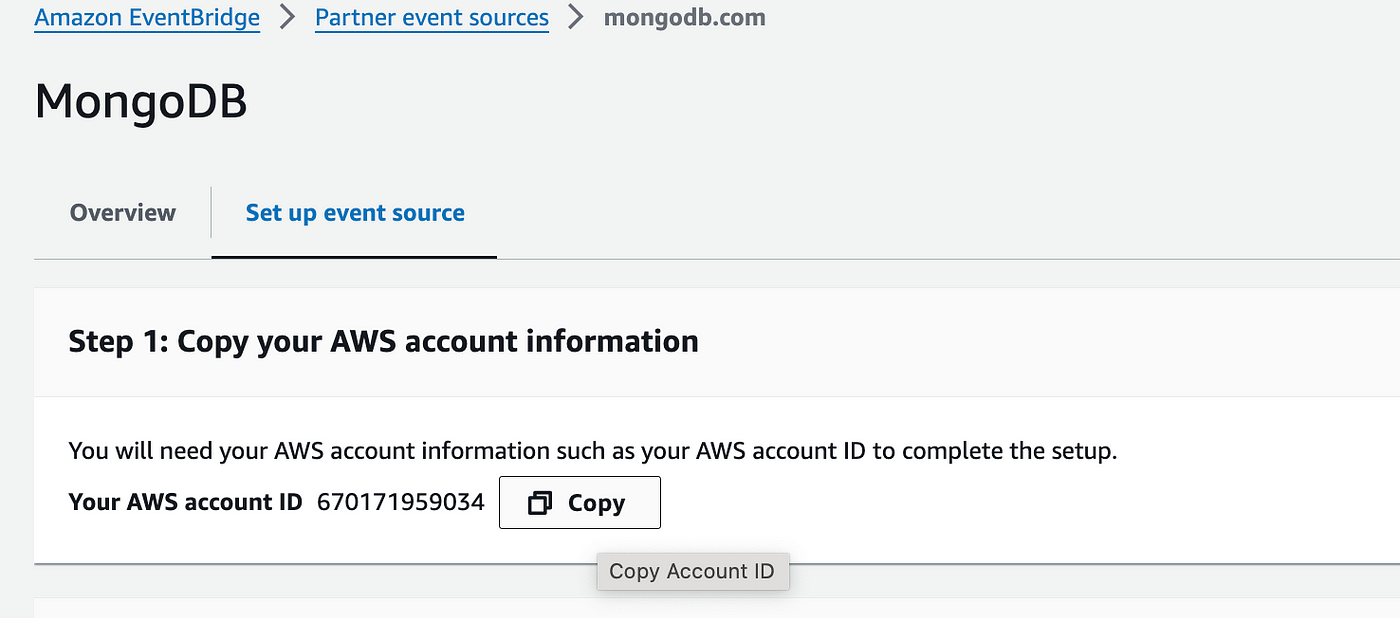

On the MongoDB partner event source page, click Copy to copy your AWS account ID to the clipboard.
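If you would rather confirm the account ID from a script than from the console, the AWS Security Token Service can report it. This is a minimal sketch, assuming boto3 and credentials for the account that should receive the events. Passing the client in as a parameter is a choice made here so the function is easy to test; it is not required by the console flow.

```python
def receiving_account_id(sts_client):
    """Return the 12-digit ID of the AWS account the given STS client is authenticated to."""
    # GetCallerIdentity requires no IAM permissions and reports the caller's account
    identity = sts_client.get_caller_identity()
    return identity["Account"]
```

For example, `receiving_account_id(boto3.client("sts"))` should match the ID shown on the MongoDB partner event source page when run with that account’s credentials.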

Configure the Trigger

Once you have the AWS account ID, you can configure a trigger to send events to EventBridge. Let’s move to the MongoAtlas dashboard and create a Cluster and a new collection for this.

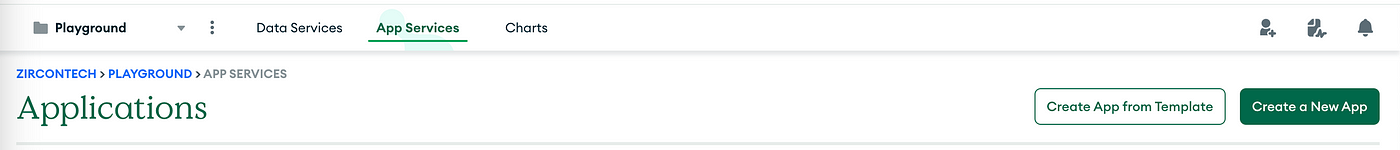

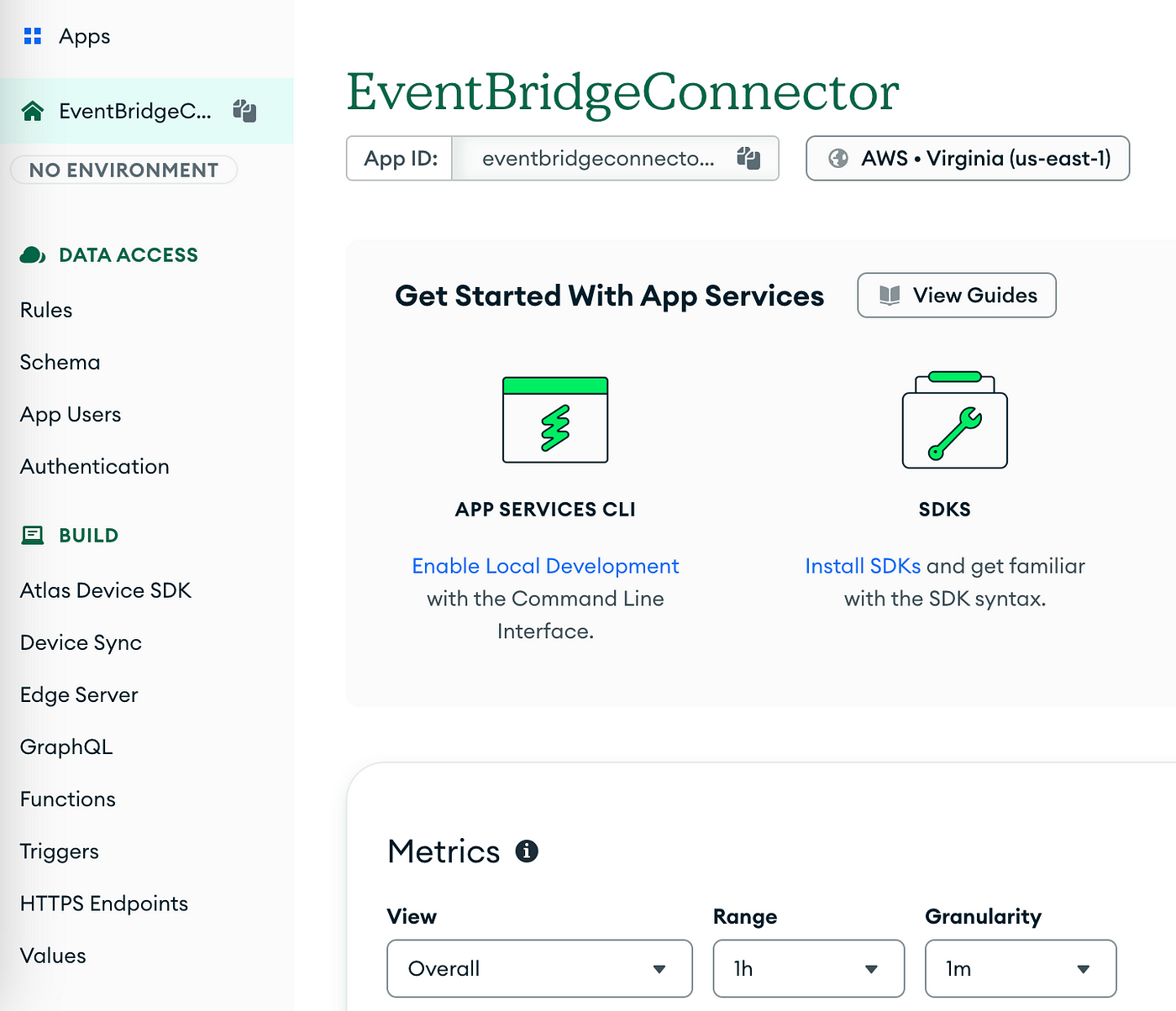

Once done, go to the App Services tab. If it’s your first time there, you’ll be asked to create a new App. Pick a name, keep the default values, and you are ready to go.

In the side panel, find the “Triggers” menu and click it. Then select “Add a Trigger”.

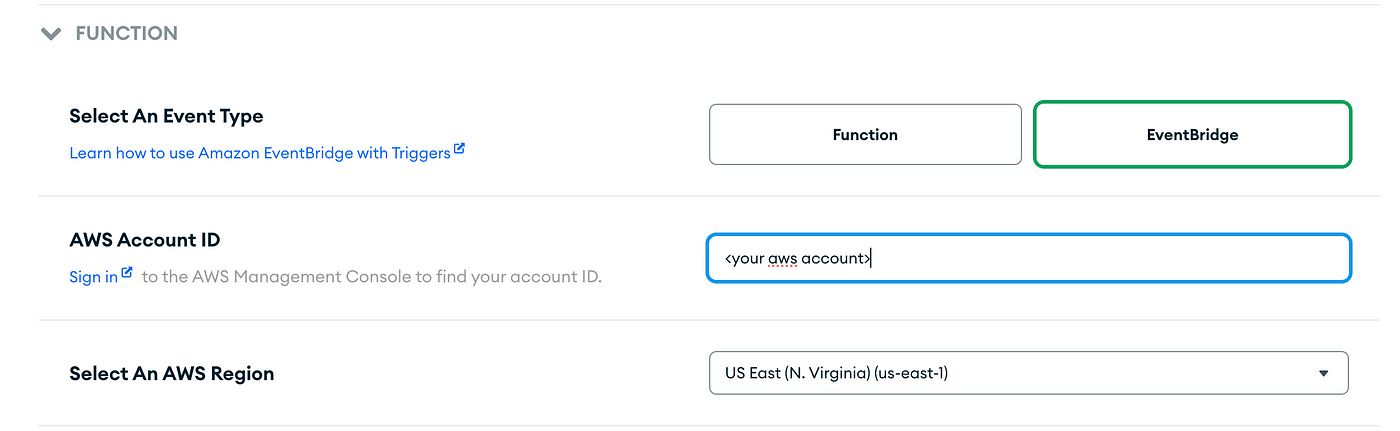

Pick a name, choose your cluster and collection, and leave the default values except for the Function section, where you’ll have to select the “Event Bridge” connector and specify the AWS Account ID obtained before.

Save and then Deploy this trigger (yes, this is a two-step creation).

Configure the Event

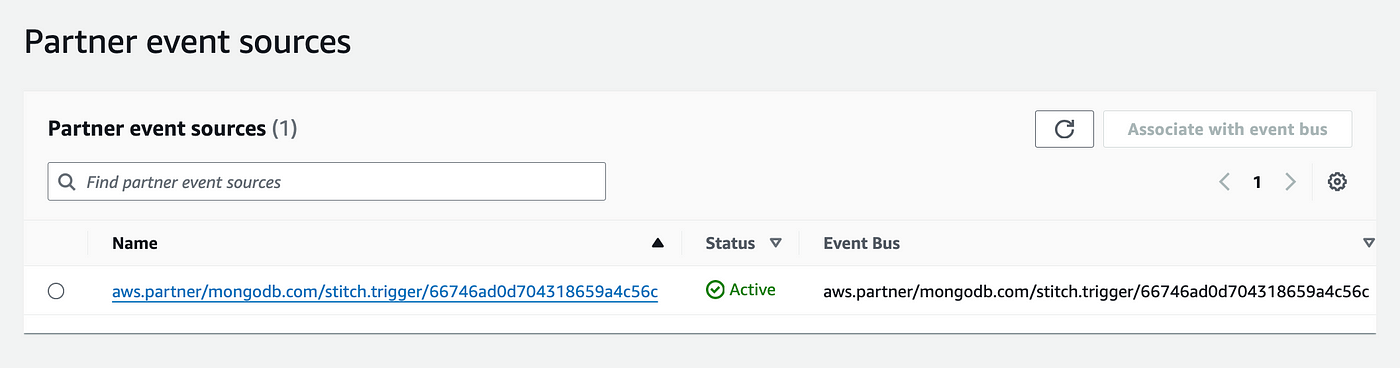

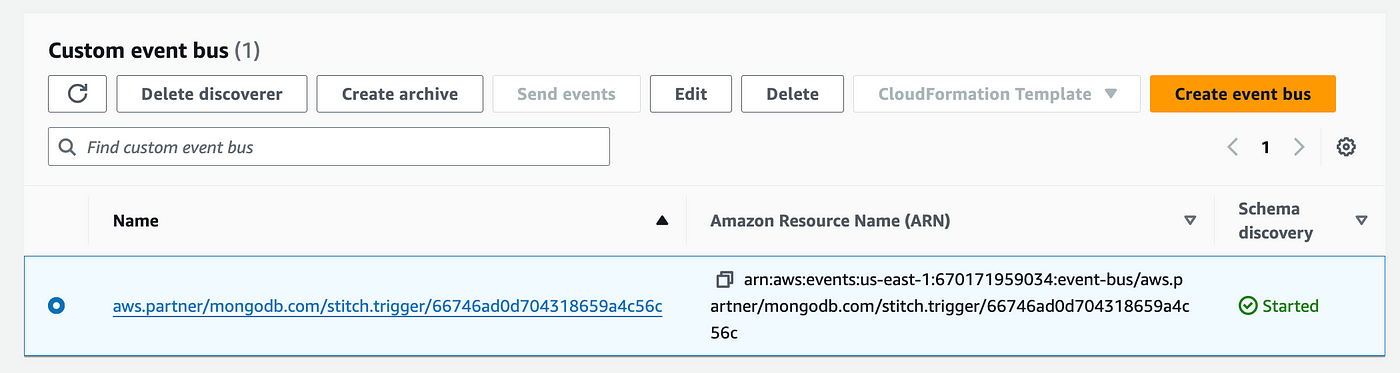

Back on the AWS side, in the Partner event sources dashboard, you should now see a request from MongoDB to establish the connection. Associate it with an event bus and confirm.

This automatically creates the event bus, but you’ll need an extra action. Back in the event bus list, find the newly created bus under Custom event bus and select “Start discovery”. This should effectively make the connection active for new events.
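The console’s “Associate with event bus” step can also be scripted. The sketch below assumes boto3’s EventBridge client and assumes the MongoDB source name starts with `aws.partner/mongodb.com` (check the exact name shown in your console); the helper names are hypothetical. It lists partner sources still in the `PENDING` state and creates a partner event bus for one of them, which is what associating does: a partner event bus must have the same name as its event source.

```python
# Assumed prefix of the MongoDB partner event source name; verify it in your console
MONGODB_SOURCE_PREFIX = "aws.partner/mongodb.com"


def pending_mongodb_sources(events_client, prefix=MONGODB_SOURCE_PREFIX):
    """List partner event sources offered to this account that still wait for a bus."""
    names, token = [], None
    while True:
        kwargs = {"NamePrefix": prefix}
        if token:
            kwargs["NextToken"] = token
        page = events_client.list_event_sources(**kwargs)
        names += [
            source["Name"]
            for source in page.get("EventSources", [])
            if source.get("State") == "PENDING"
        ]
        # Follow pagination until the API stops returning a token
        token = page.get("NextToken")
        if not token:
            return names


def associate_with_bus(events_client, source_name):
    """Create the partner event bus for a source and return the bus ARN."""
    # The bus name must match the partner event source name exactly
    response = events_client.create_event_bus(Name=source_name, EventSourceName=source_name)
    return response["EventBusArn"]
```

Usage, for example: `events = boto3.client("events")` followed by `associate_with_bus(events, pending_mongodb_sources(events)[0])`.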

Configure the rule

Event buses won’t do much without a rule that connects the Event Source with a Target. Therefore we’ll have to create a new Rule.

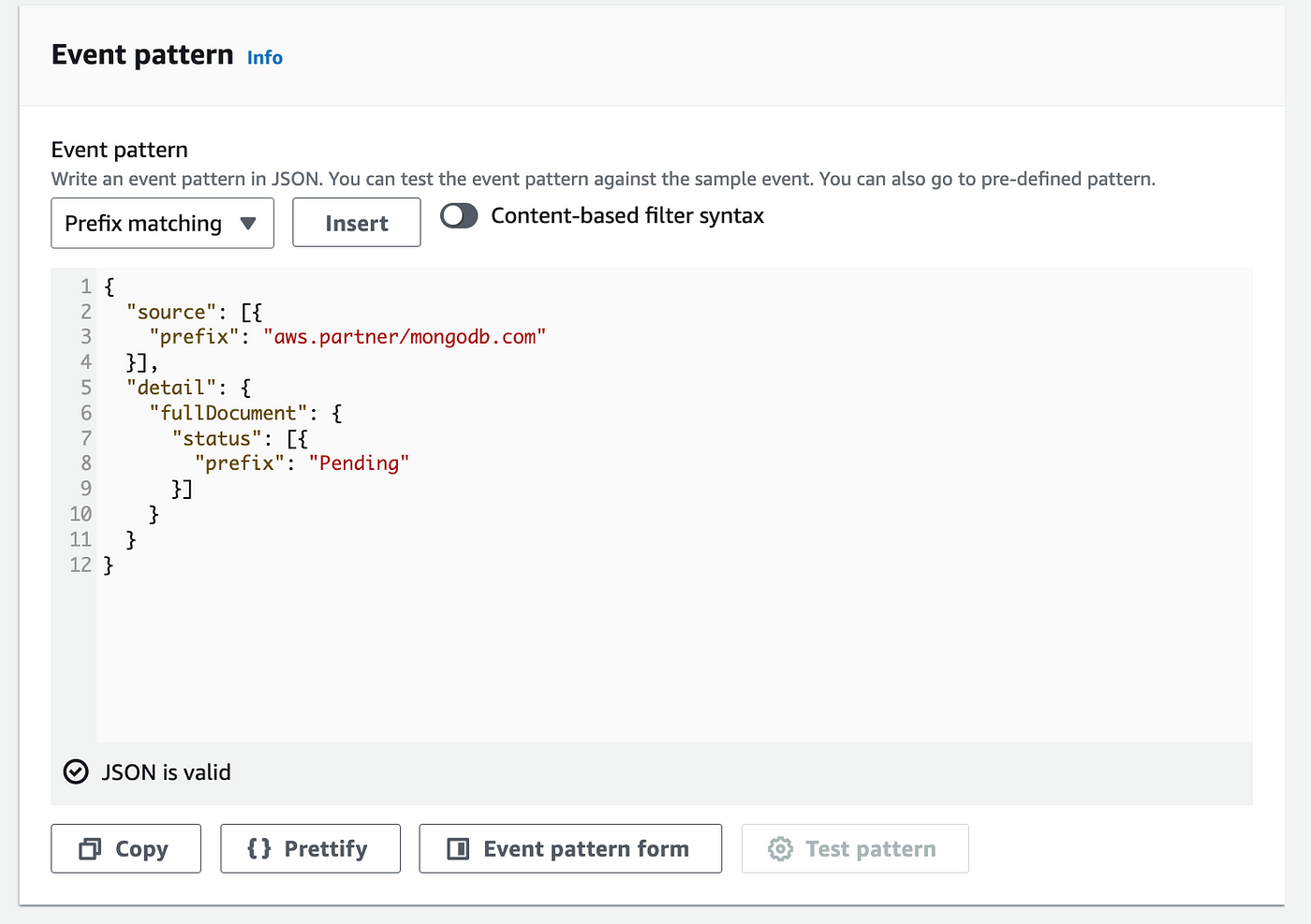

In the first step, enter the basic details (name, description, event bus). In the second step, define the event pattern for your rule, which lets you keep only the events you are interested in. In this case, we’ll only take orders in “Pending” status, meaning they were just created.

The source entry ensures that the event indeed comes from MongoDB.
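The rule’s event pattern can also be expressed in code. This is a sketch, not the exact pattern from the screenshot: it assumes the MongoDB source prefix `aws.partner/mongodb.com`, assumes the order document stores its state in a field named `status` (hypothetical), and assumes the change event’s `operationType` is available under `detail`. The `matches` helper is a deliberately simplified local approximation of EventBridge matching (nested objects, value lists, and `prefix` only) for quick checks; EventBridge’s own TestEventPattern API gives the authoritative answer.

```python
import json

# Hypothetical pattern: MongoDB partner events for inserted orders in "Pending" status
EVENT_PATTERN = {
    "source": [{"prefix": "aws.partner/mongodb.com"}],
    "detail": {
        "operationType": ["insert"],
        "fullDocument": {"status": ["Pending"]},
    },
}


def create_pending_orders_rule(events_client, bus_name, rule_name="new-pending-orders"):
    """Create (or update) the rule on the partner bus and return its ARN."""
    response = events_client.put_rule(
        Name=rule_name,
        EventBusName=bus_name,
        EventPattern=json.dumps(EVENT_PATTERN),
        State="ENABLED",
    )
    return response["RuleArn"]


def _matches_value(alternative, value):
    # {"prefix": "..."} matches strings starting with that prefix; anything else is a literal
    if isinstance(alternative, dict) and "prefix" in alternative:
        return isinstance(value, str) and value.startswith(alternative["prefix"])
    return alternative == value


def matches(pattern, event):
    """Simplified subset of EventBridge matching, for local experiments only."""
    for key, expected in pattern.items():
        if key not in event:
            return False
        value = event[key]
        if isinstance(expected, dict):
            # Nested pattern objects must match nested event objects
            if not (isinstance(value, dict) and matches(expected, value)):
                return False
        elif not any(_matches_value(alt, value) for alt in expected):
            # A list means "any of these alternatives"
            return False
    return True
```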

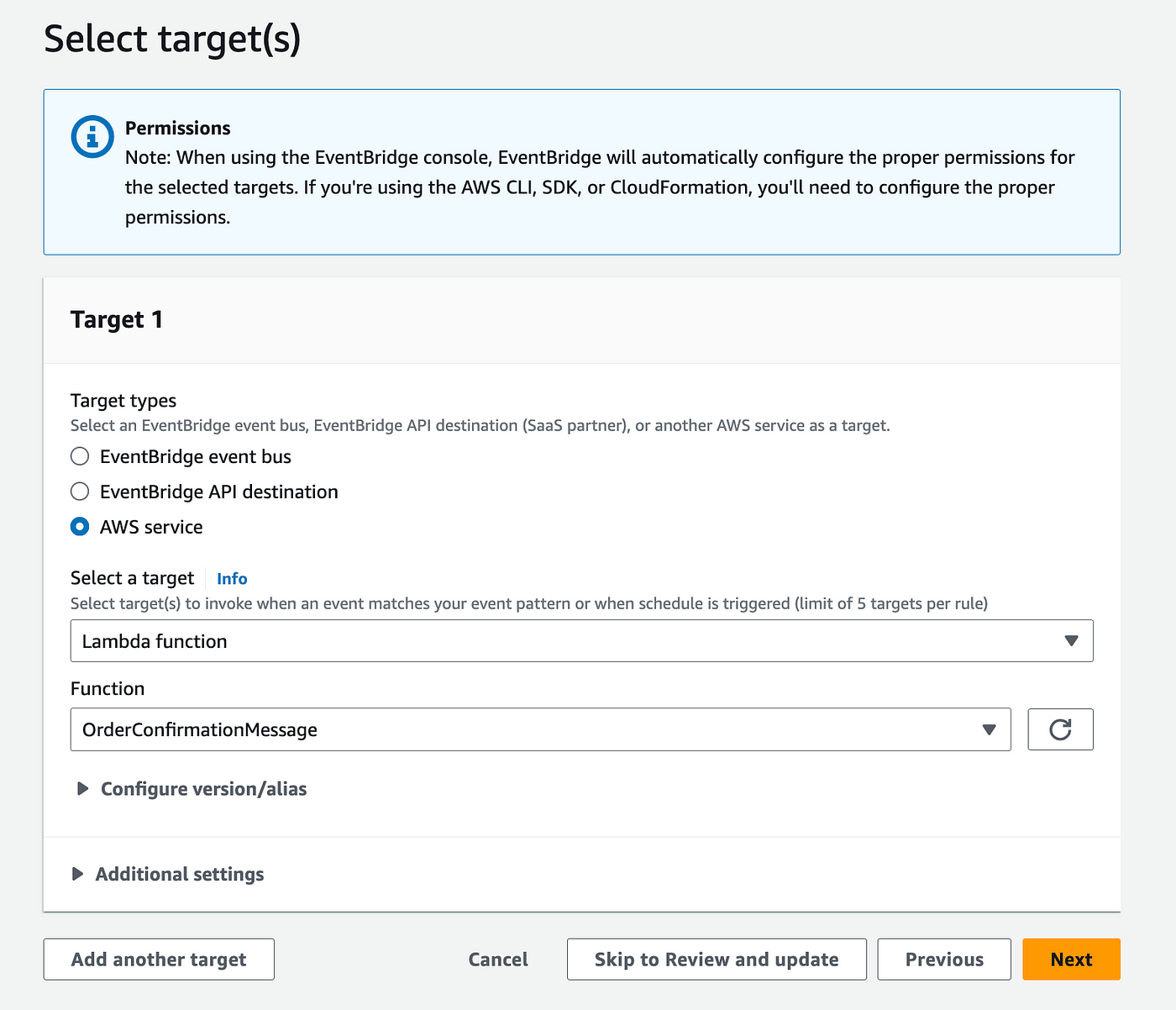

In the target section, you’re free to continue the flow however you prefer. In this case, we’ll use a Lambda function to format a notification message and publish it to an SNS topic in charge of delivering an SMS.
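When the target is added in the console, EventBridge also grants itself permission to invoke the Lambda function. If you script the setup instead, both steps are needed. A minimal sketch, assuming boto3 `events` and `lambda` clients; the target ID and statement ID are hypothetical names.

```python
def attach_lambda_target(events_client, lambda_client, bus_name, rule_name, rule_arn, function_arn):
    """Let the rule invoke the function, then register the function as the rule's target."""
    # Resource-based permission, scoped so only this rule can invoke the function
    lambda_client.add_permission(
        FunctionName=function_arn,
        StatementId=f"{rule_name}-invoke",
        Action="lambda:InvokeFunction",
        Principal="events.amazonaws.com",
        SourceArn=rule_arn,
    )
    # The target Id only needs to be unique within this rule
    response = events_client.put_targets(
        Rule=rule_name,
        EventBusName=bus_name,
        Targets=[{"Id": "notify-order-lambda", "Arn": function_arn}],
    )
    # put_targets reports per-target failures in the response instead of raising
    if response.get("FailedEntryCount", 0):
        raise RuntimeError(f"Failed to add target: {response['FailedEntries']}")
```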

Receiving the event in Lambda

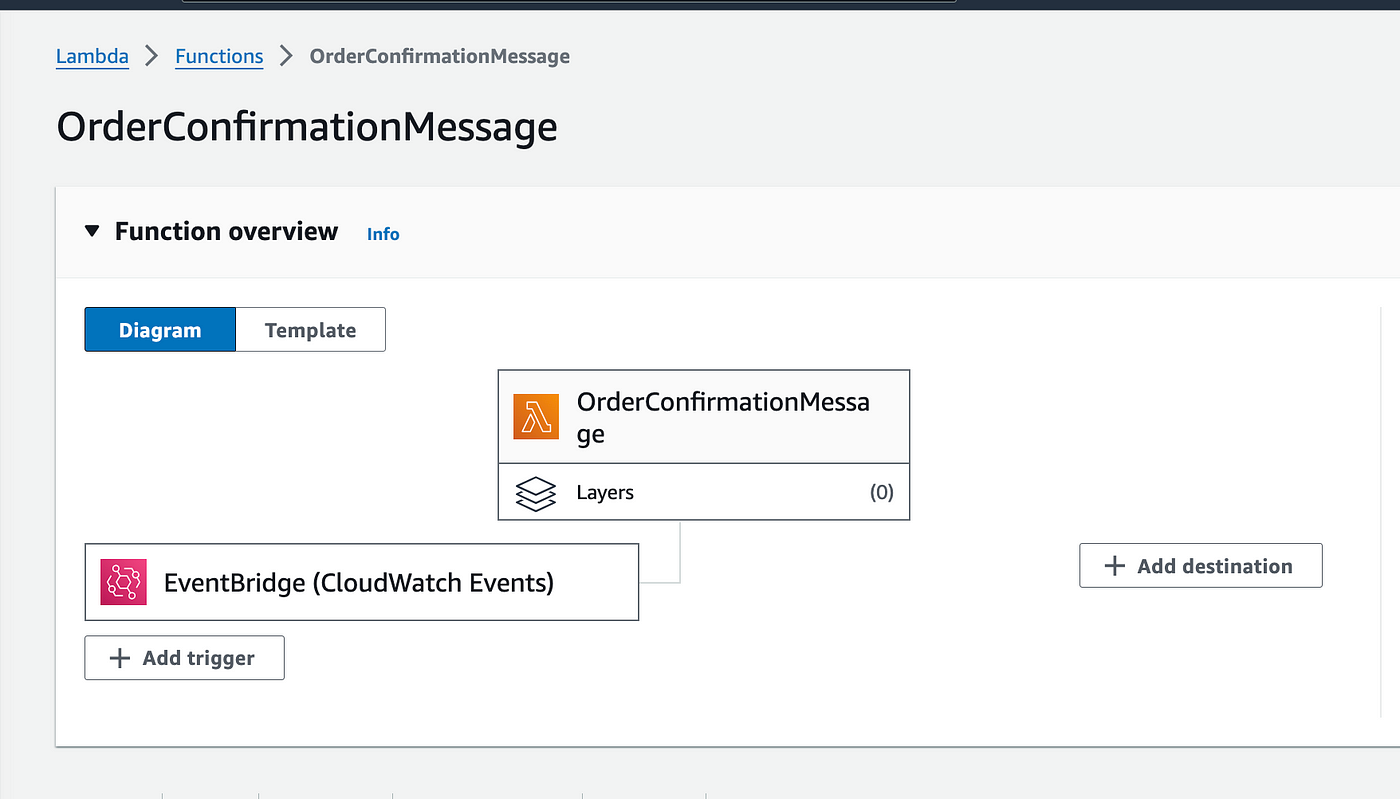

To close the loop of this process, let’s create a Lambda function (which you’ll then set as the target of the EventBridge rule).

It is important to give your Lambda function permission to publish messages to SNS.
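One way to grant that permission is an inline policy on the function’s execution role that allows `sns:Publish` on the specific topic only. A minimal sketch, assuming boto3’s IAM client and a hypothetical policy name; attaching an equivalent policy through the console works just as well.

```python
import json


def sns_publish_policy(topic_arn):
    """Least-privilege identity policy: publish to one SNS topic and nothing else."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {"Effect": "Allow", "Action": "sns:Publish", "Resource": topic_arn}
        ],
    }


def grant_publish(iam_client, role_name, topic_arn, policy_name="publish-order-notifications"):
    # Attach the policy inline to the Lambda function's execution role
    iam_client.put_role_policy(
        RoleName=role_name,
        PolicyName=policy_name,
        PolicyDocument=json.dumps(sns_publish_policy(topic_arn)),
    )
```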

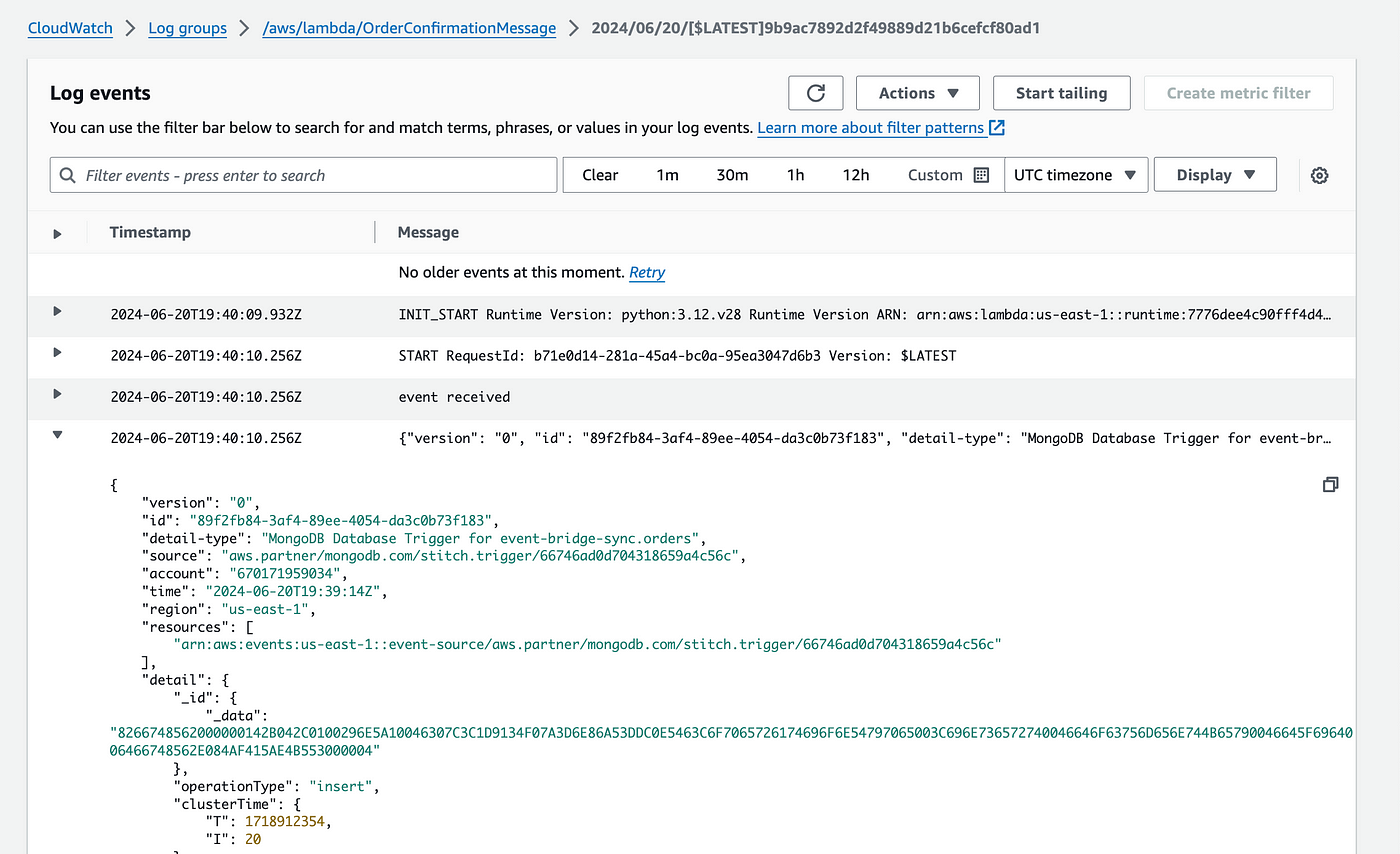

To keep it simple, this Lambda function receives the event payload, which includes the default EventBridge envelope plus the details of the newly created Mongo document, and publishes a notification to SNS.
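For reference, a delivered event looks roughly like the dictionary below. The top-level keys are EventBridge’s standard envelope, and the `detail` section carries the MongoDB change event. Every value is a made-up placeholder, the order fields are hypothetical, and the serialization of `_id` (shown here in Extended JSON) depends on your trigger settings, so compare it with the event your Lambda actually logs.

```python
# Illustrative payload only: all IDs, names, and document fields are placeholders
SAMPLE_EVENT = {
    "version": "0",
    "id": "11111111-2222-3333-4444-555555555555",
    "detail-type": "MongoDB Database Trigger for shop.orders",
    "source": "aws.partner/mongodb.com/stitch.trigger/000000000000000000000000",
    "account": "123456789012",
    "time": "2024-01-01T12:00:00Z",
    "region": "us-east-1",
    "resources": [],
    "detail": {
        "operationType": "insert",
        "ns": {"db": "shop", "coll": "orders"},
        "documentKey": {"_id": {"$oid": "65a000000000000000000001"}},
        "fullDocument": {
            "_id": {"$oid": "65a000000000000000000001"},
            "customerPhone": "+15555550100",
            "status": "Pending",
        },
    },
}


def new_order(event):
    """Return the inserted MongoDB document carried by the event."""
    return event["detail"]["fullDocument"]
```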

Here’s what the code looks like in Python:

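```python
import json

import boto3

TOPIC_ARN = '<YOUR_SNS_TOPIC_ARN>'

# Create the client once, outside the handler, so warm invocations reuse it
sns = boto3.client('sns')


def lambda_handler(event, context):
    print("event received")
    print(json.dumps(event))

    # The newly inserted MongoDB document travels in the event's "detail" section
    order = event['detail']['fullDocument']

    # Extract the customerPhone from the payload.
    # This scenario assumes the user's number is already subscribed to the SNS topic,
    # but this Lambda could also subscribe the user to the SNS topic.
    customer_phone = order['customerPhone']

    # _id may arrive as a plain string or in Extended JSON form ({"$oid": "..."}),
    # depending on how the trigger serializes documents; normalize it to a string
    order_id = order['_id']
    if isinstance(order_id, dict):
        order_id = order_id.get('$oid', json.dumps(order_id))

    # Send a text message through SNS
    sns.publish(
        TopicArn=TOPIC_ARN,
        Message='Your order is being processed. Order ID: ' + str(order_id),
    )

    print("Message sent!")

    return {
        'statusCode': 200
    }
```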

Testing the flow

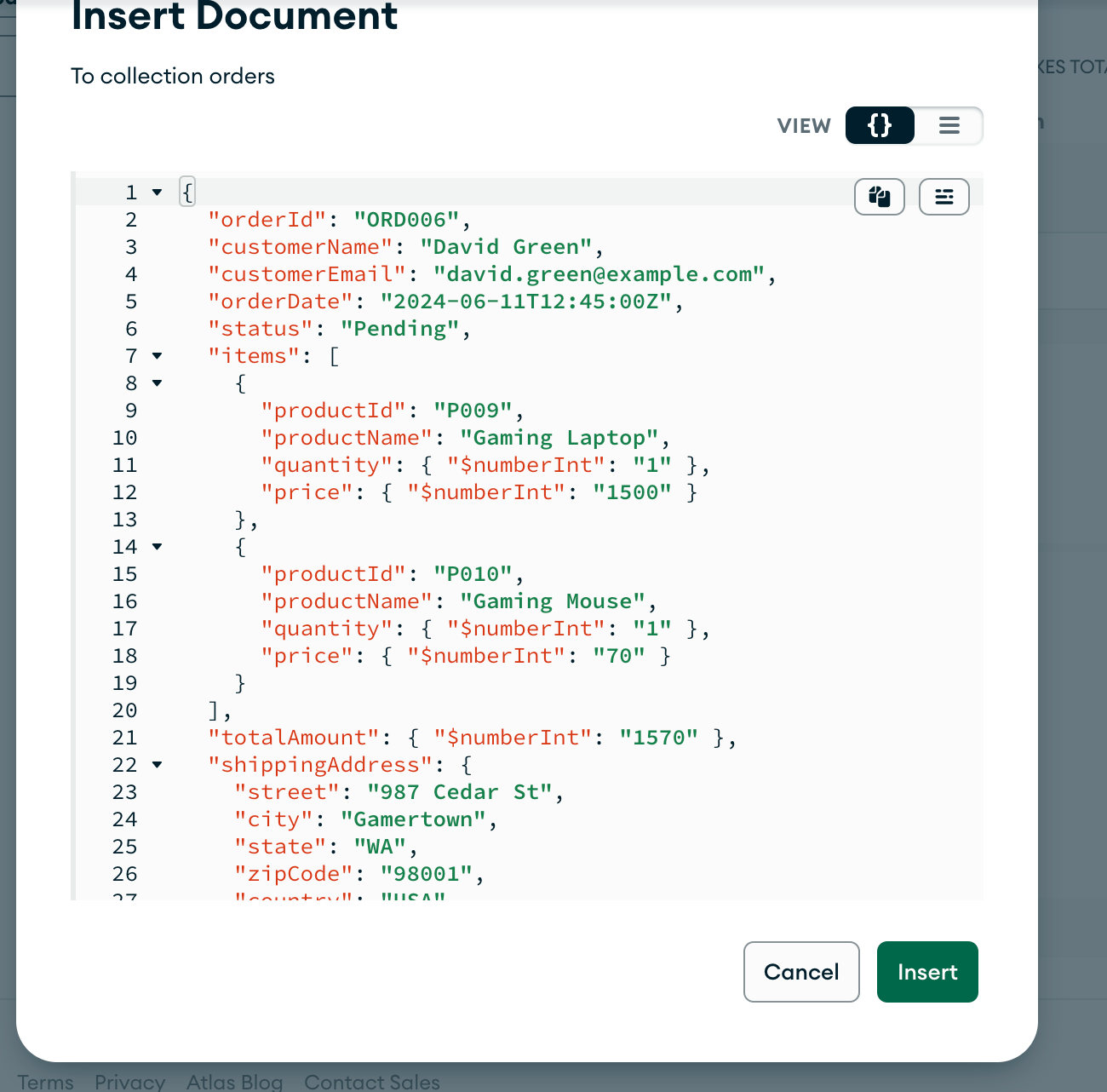

To test the flow, let’s manually insert a new Mongo record. In a more realistic scenario, this would be done through, for example, an API service.
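Inserting the test record from code looks roughly like this. It is a sketch assuming PyMongo and a hypothetical order shape (`customerPhone`, `status`, `createdAt`); the collection is passed in, so any database and collection names work.

```python
from datetime import datetime, timezone


def insert_test_order(orders_collection, customer_phone):
    """Insert a hypothetical order document that the trigger and rule should pick up."""
    order = {
        "customerPhone": customer_phone,
        # Must match the value filtered in the rule's event pattern
        "status": "Pending",
        "createdAt": datetime.now(timezone.utc),
    }
    result = orders_collection.insert_one(order)
    return result.inserted_id
```

Usage, for example: `insert_test_order(MongoClient(uri)["shop"]["orders"], "+15555550100")` after `from pymongo import MongoClient`, where `uri`, `shop`, and `orders` are placeholders for your own connection string, database, and collection.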

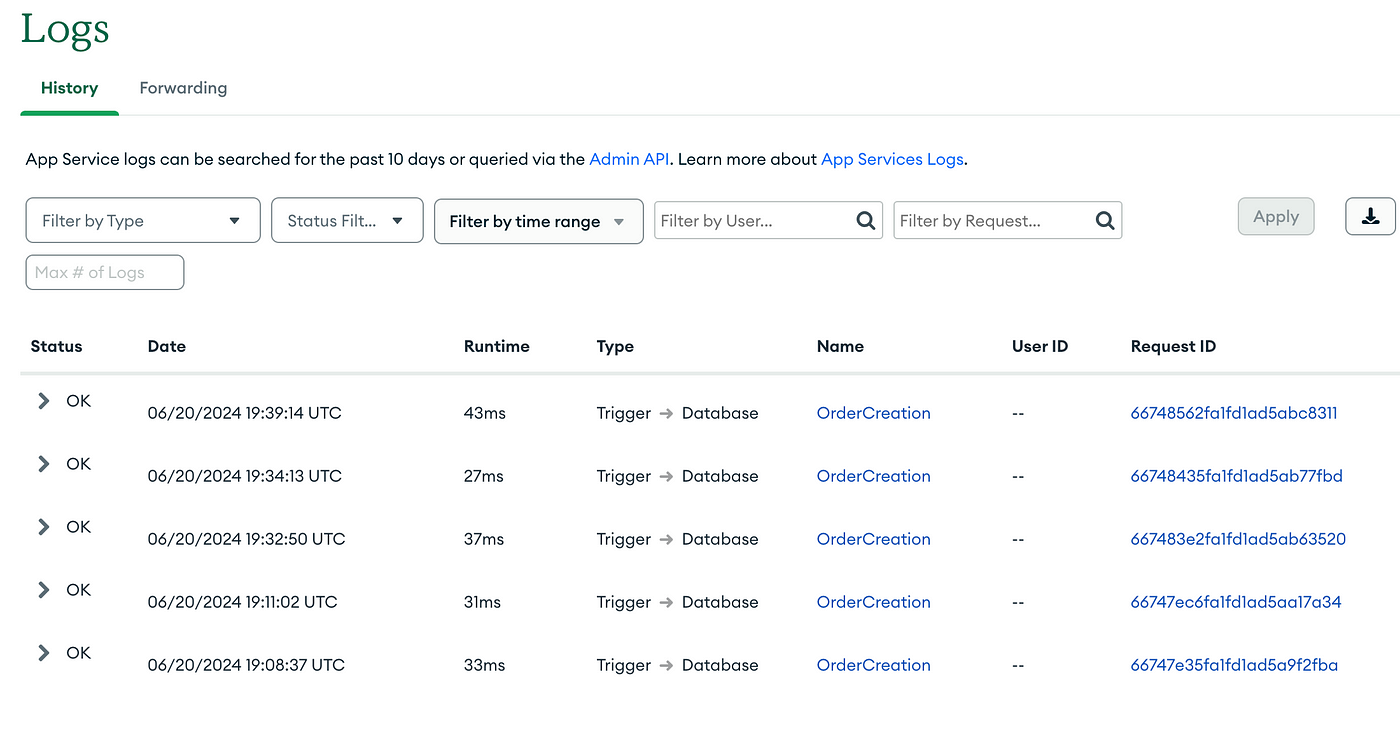

Once done, let’s confirm that every part of the flow is doing its job. If you go to the Logs tab within the Atlas dashboard, you should find a new entry showing that the Trigger was executed.

Back on AWS, this event should have gone through our EventBridge bus, matched the rule’s event pattern, and reached the Lambda function for processing. If everything is correct, the Lambda function logs should show that the received event was processed. The whole process should be practically instant.
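Instead of browsing the console, you can search the function’s CloudWatch Logs for the `Message sent!` line the handler prints. A sketch assuming boto3’s CloudWatch Logs client and Lambda’s default log group naming (`/aws/lambda/<function name>`); the helper name is hypothetical.

```python
def find_lambda_log_lines(logs_client, function_name, marker="Message sent!", start_ms=None):
    """Return log messages from the function's log group that contain `marker`."""
    kwargs = {
        # Lambda writes to /aws/lambda/<function name> by default
        "logGroupName": f"/aws/lambda/{function_name}",
        # A quoted term matches the exact phrase in CloudWatch Logs filter syntax
        "filterPattern": f'"{marker}"',
    }
    if start_ms is not None:
        # Epoch milliseconds; narrows the search to recent invocations
        kwargs["startTime"] = start_ms
    messages = []
    while True:
        page = logs_client.filter_log_events(**kwargs)
        messages += [event["message"] for event in page.get("events", [])]
        token = page.get("nextToken")
        if not token:
            return messages
        kwargs["nextToken"] = token
```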

If you also connected this Lambda function to an SNS topic, the message should have been published and your user should have received a notification.

❓Why does it work?

So far we’ve seen the abstracted result of this implementation, but what’s happening behind the curtains?

MongoDB Atlas provides Atlas Triggers, which can execute application and database logic. Triggers can respond to events or run on pre-defined schedules.

Triggers listen for events of a configured type. Each Trigger links to a specific Atlas Function. When a Trigger observes an event that matches your configuration, it “fires”. The Trigger passes this event object as the argument to its linked Function.

There are three kinds of Triggers:

- Database triggers respond to document insertions, changes, or deletions. You can configure Database Triggers for each linked MongoDB collection.

- Authentication triggers respond to user creation, login, or deletion.

- Scheduled triggers execute functions according to a pre-defined schedule.

Our use case focuses on Database triggers, where most of the action happens, but keep in mind that the same approach can be extended to other purposes.
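Atlas Database Triggers are built on MongoDB change streams, so conceptually the trigger is a managed version of a listener like the one below. This sketch uses PyMongo’s `Collection.watch` only to illustrate the mechanism; with the EventBridge integration you don’t need to run anything like it yourself. The `status` filter reuses the hypothetical order field from earlier, and the helper name is an assumption.

```python
def watch_new_orders(orders_collection, handle, max_events=None):
    """Call `handle` with each newly inserted "Pending" order, as a Database Trigger would."""
    # Filter change events server-side: only inserts of pending orders
    pipeline = [{"$match": {"operationType": "insert", "fullDocument.status": "Pending"}}]
    seen = 0
    with orders_collection.watch(pipeline) as stream:
        for change in stream:
            # Insert events always carry the full inserted document
            handle(change["fullDocument"])
            seen += 1
            if max_events is not None and seen >= max_events:
                break
    return seen
```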

💡What else can we do?

While the purpose of this article is to show how smooth and easy the integration between MongoAtlas and EventBridge is, you can build on this base for many other use cases. If you are already using MongoAtlas in your project, keep in mind that this configuration can simplify flows that would otherwise require extra code, added complexity, or unreliable workarounds. Furthermore, EventBridge can dispatch events to a wide range of AWS services.

References

- https://www.mongodb.com/docs/atlas/app-services/triggers/

- https://www.mongodb.com/docs/atlas/app-services/triggers/aws-eventbridge/

- https://docs.aws.amazon.com/eventbridge/latest/userguide/eb-saas.html


That’s pretty much it. Thanks for reading! 📖

You can follow me on Twitter and check other posts at ZirconTech for another dose of cool tech stuff.

Cheers!

Will